The Mil & Aero Blog

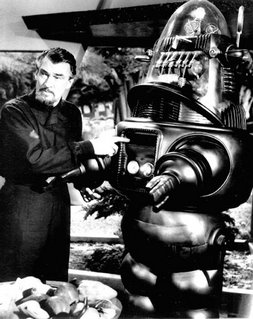

Posted by John Keller

Lots of people have felt queasy about monster robots ever since they saw that cinematic classic Forbidden Planet, made half a century ago with its mechanical star Robby the Robot. The I, Robot movie with Will Smith a few years ago didn't make these folks feel much better.

I admit that it can be fun to see creepy robots at the movies, but I think it's important to acknowledge a clear difference between monster movie robots and today's generation of unmanned vehicles.

Autonomous vehicles such as the U.S. MQ-1 Predator and MQ-9 Reaper unmanned aerial vehicles can do destructive things -- like finding and killing terrorists before they can kill coalition soldiers. It doesn't necessarily follow that, since these machines can do destructive things, they pose unacceptable risks to humanity.

Someone should tell this to a university professor in Sheffield, England, named Noel Sharkey, who in a keynote address to the Royal United Services Institute (RUSI) is warning of the threat posed to humanity by new robot weapons being developed by powers worldwide.

A University of Sheffield news release, headlined Killer military robots pose latest threat to humanity, lays out Professor Sharkey's case, which essentially boils down to these points:

- we are beginning to see the first steps towards an international robot arms race;
- robots may become standard terrorist weapons to replace the suicide bomber;
- the United States is giving priority to robots that will decide on where, when, and whom to kill;
- robots are easy for terrorists to copy and manufacture;
- robots have difficulty discriminating between combatants and innocents; and
- it's urgent for the international community to assess the risks of robot weapons.

It's pretty easy to see where this line of reasoning is going: robots can do bad things, therefore we ought to consider banning them. I just don't follow the logic.

It's the same argument as the one for banning guns, which basically says that guns kill; ban guns and nobody gets killed. That argument obviously doesn't wash. Banning guns succeeds only in disarming law-abiding citizens, and leaving them at the mercy of criminals who don't particularly care if there's a law prohibiting them from having guns or not. The bad guys get guns no matter what.

It's the same thing with autonomous vehicles. Ban them, and only the criminal terrorists will have them. Law-abiding countries would be at a disadvantage.

Let's look at Professor Sharkey's line of reasoning point by point.

Let's consider that we could be in the beginning of an international robot arms race. No way to prevent this, and so what if we are? No weapon has ever been invented that wasn't put to use. Trying to prevent the spread of robot technology would be like trying to prevent the spread of the automobile. People want 'em, they'll get 'em.

Second point: robots may become terrorist weapons to replace the suicide bomber. Let's remember that Muslim suicide bombers get 72 virgins in heaven if they succeed in blowing themselves up. I doubt if they'll want to share those virgins with robots.

Next point: the U.S. is giving priority to robots that will decide on where, when, and whom to kill. Little chance of this. The so-called doctrine of "man in the loop" is part of the fabric of military procedures. People make the kill decisions, not the robots. Besides, everyone these days is scared to death of lawsuits. No robot manufacturer or user would allow his machines to have sole control over life-and-death decisions.

How about the claim that robots have difficulty discriminating between combatants and innocents? See paragraph above.

Let's consider that robots are easy for terrorists to copy and manufacture. Okay, so are guns, knives, personal computers, and can openers. Look what the terrorists have done with improvised explosive devices. They're pretty good at it, but U.S. forces and their allies are dealing with this threat. Plus, anything the terrorists build, we can build better. Let the robot races begin!

Last point: it's urgent for the international community to assess the risks of robot weapons. Get the U.N. involved in autonomous vehicle policy? No, thanks. I spent 10 years as a reporter in Washington watching the federal government screw up nearly everything it touched. The U.N. is even worse.

With apologies to Professor Sharkey, I think we should tend to our robots, and let the other guys tend to theirs.

Welcome to the lighter side of Military & Aerospace Electronics. This is where our staff recount tales of the strange, the weird, and the otherwise offbeat. We could put news here, but we have the rest of our Website for that. Enjoy our scribblings, and feel free to add your own opinions. You might also get to know us in the process. Proceed at your own risk.

John Keller is editor-in-chief of Military & Aerospace Electronics magazine, which provides extensive coverage and analysis of enabling electronic and optoelectronic technologies in military, space, and commercial aviation applications. A member of the Military & Aerospace Electronics staff since the magazine's founding in 1989, Mr. Keller took over as chief editor in 1995.

Courtney E. Howard is senior editor of Military & Aerospace Electronics magazine. She is responsible for writing news stories and feature articles for the print publication, as well as composing daily news for the magazine's Website and assembling the weekly electronic newsletter. Her features have appeared in such high-tech trade publications as Military & Aerospace Electronics, Computer Graphics World, Electronic Publishing, Small Times, and The Audio Amateur.

John McHale is executive editor of Military & Aerospace Electronics magazine, where he has been covering the defense industry for more than a dozen years. During that time he also led PennWell's launches of magazines and shows on homeland security and a defense publication and website in Europe. Mr. McHale has served as chairman of the Military & Aerospace Electronics Forum and its Advisory Council since 2004. He lives in Boston with his golf clubs.

THE MAE WEBSITE AUTHORS ARE SOLELY RESPONSIBLE FOR THE CONTENT AND ACCURACY OF THEIR BLOGS, INCLUDING ANY OPINIONS THEY EXPRESS, AND PENNWELL IS NOT RESPONSIBLE FOR AND HEREBY DISCLAIMS ANY AND ALL LIABILITY FOR THE CONTENT, ITS ACCURACY, AND OPINIONS THAT MAY BE CONTAINED HEREIN. THE CONTENT ON THE MAE WEBSITE MAY BE DATED AND PENNWELL IS UNDER NO OBLIGATION TO PROVIDE UPDATES TO THE INFORMATION INCLUDED HEREIN.

Copyright © 2007: PennWell Corporation, Tulsa, OK; All Rights Reserved.

David Brockman, Utilytech Corp

Wednesday, March 19, 2008 4:24:00 PM EDT

I do not have a problem with "man in the loop" robots. It is dumb autonomous robots that I am concerned about. I am not politically active and I am not anti-military. Laying my cards face up on the table, my position is simply that it is the duty and moral responsibility of all citizens to protect innocent people everywhere regardless of creed, race or nationality. All innocents have a right to protection.

There are many breaches of this right that fall outside of my remit. I concern myself solely with new weapons that are being constructed using research from my own field of enquiry.

My concerns arise from my knowledge of the limitations of Artificial Intelligence and robotics. I am clearly not calling for a ban on all robots as I have worked in the field for nearly 30 years.

I don't blame you for not believing me about the use of fully autonomous battle robots. I found it hard to believe myself until I read the plans and talked to military officers about them. Just do a Google search for military roadmaps to help you get up to speed on this issue. Read the roadmaps for the Air Force, Army and Navy published in 2005, or the December 2007 Unmanned Systems Roadmap 2007-2032, and you might begin to see my concerns.

In a book published by The National Academies Press in 2005, a Naval committee wrote that, “The Navy and Marine Corps should aggressively exploit the considerable warfighting benefits offered by autonomous vehicles (AVs) by acquiring operational experience with current systems and using lessons learned from that experience to develop future AV technologies, operational requirements, and systems concepts.”

The signs are there that such plans are falling into place. On the ground, DARPA, the US Defence Agency, ran a successful Grand Robotics challenge for four years in an autonomous vehicle race across the Mojave Desert. In 2007 it changed to an urban challenge where autonomous vehicles navigated around a mock city environment. You don’t have to be too clever to see where this is going. In February this year DARPA showed off their “Unmanned Ground Combat Vehicle and Perceptor Integration System”, otherwise known as the Crusher. This is a 6.5 ton robot truck, nine feet wide, with no room for passengers or a steering wheel. It travels at 26 mph, and Stephen Welby, director of DARPA's Tactical Technology Office, said, “This vehicle can go into places where, if you were following in a Humvee, you’d come out with spinal injuries.” Carnegie Mellon University's Robotics Institute is reported to have received $35 million over four years to deliver this project. Admittedly it is just a demonstration project at present.

Last month BAE tested software for a squadron of planes that could select their own targets and decide among themselves which each should acquire. Again, this is a demonstration system, but the signs are there.

There are a number of good military reasons for such a move. Teleoperated systems are expensive to manufacture and require many support personnel to run them. One of the main goals of the Future Combat Systems project is to use robots as a force multiplier so that one soldier on the battlefield can be a nexus for initiating a large scale robot attack from the ground and the air. Clearly one soldier cannot operate several robots alone. Autonomous systems have the advantage of being able to make decisions in nanoseconds while humans need a minimum of hundreds of milliseconds.

FCS spending is going to be in the order of $230 billion, with spending on unmanned systems expected to exceed $24 billion ($4 billion up to 2010).

The downside is that autonomous robots that are allowed to make decisions about who to kill fall foul of the fundamental ethical precepts of a just war under jus in bello, as enshrined in the Geneva and Hague conventions and the various protocols set up to protect innocent civilians, wounded soldiers, the sick, the mentally ill, and captives.

There is no way for a robot or artificial intelligence system to determine the difference between a combatant and an innocent civilian. There are no visual or sensing systems up to that challenge. The Laws of War provide no clear definition of a civilian that can be used by a machine. The 1949 Geneva Convention requires the use of common sense, while the 1977 Protocol 1 (Article 50) essentially defines a civilian in the negative sense as someone who is not a combatant. Even if there were a clear definition and even if sensing were possible, human judgment is required for the infinite number of circumstances where lethal force is inappropriate. Just think of a child forced to carry an empty rifle.

The Laws of War also require that lethal force be proportionate to military advantage. Again, there is no sensing capability that would allow a robot to make such a determination, nor is there any known metric to objectively measure needless, superfluous or disproportionate suffering. It requires human judgment. Yes, humans do make errors and can behave unethically, but they can be held accountable. Who is to be held responsible for the lethal mishaps of a robot? Certainly not the machine itself. There is a long causal chain associated with robots: the manufacturer, the programmer, the designer, the department of defense, the generals or admirals in charge of the operation, and the operator.

This is where your analogy with gun control breaks down. If someone kills an innocent with a gun, they are responsible for the crime. The gun does not decide who to kill. If criminals decided to put guns on robots that wandered around shooting innocent people, do you think that the good citizens should also put guns on robots to go around shooting innocent people? It does not make sense.

The military forces in the civilized world do not want to kill civilians. There are strong legal procedures in the US, and JAG has to validate all new weapons. My worry is that there will be a gradual sleepwalk into the use of autonomous weapons like the ones that I mentioned. I want us to step back and make the policies rather than let the policies make themselves.

I take your point (and greater expertise than mine) about the slowness of politics and the UN. But we must try. There are no current international guidelines or even discussions about the uses of autonomous robots in warfare. These are needed urgently. The present machines could be little more than heavily armed mobile mines, and we have already seen the damage that landmines have caused to children at play. Imagine the potential devastation of heavily armed robots on a deep mission out of radio communication. The only humane course of action is to severely restrict or ban the deployment of these new weapons until it can be demonstrated that they can pass an “innocents discrimination test” in real-life circumstances.

I am pleased that you have allowed this opportunity to debate the issues and put my viewpoint forward.

best wishes,

Noel

Wednesday, March 26, 2008 5:44:00 AM EDT